Hello and welcome to the first issue of the #dbtips digest! In this digest, I will share short tips and quick news about dbt and analytics engineering.

I created this format because not every tip deserves a full-fledged post, and news is hard to fit into such posts. The digest format solves both problems.

In today's digest, I will cover the new version of dbt Core, share a couple of documentation tips (based on feedback on previous posts), and show a few dbt commands that I find useful in my work. Let's get started!

dbt Core 1.7

On November 2, dbt Labs released a new version of dbt Core (v1.7). In my opinion, most of the changes in this release relate to MetricFlow and data contracts (really hot topics!), but some other useful features were added too. Let's look at the most interesting changes.

Since version 1.6, dbt natively supports MetricFlow, the successor to the dbt_metrics package. MetricFlow generates SQL from metric definitions; in other words, it is a Semantic Layer. Version 1.7 continues to extend it with new and improved features. If you want to better understand what a Semantic Layer is and how MetricFlow implements it, I recommend reading this article from the official documentation. It is a good quick start.

The dbt docs generate command now supports the --select syntax, which lets you generate documentation for only a subset of models. This is particularly useful in large projects where only part of the project needs HTML documentation. As I understand it, this feature is also part of dbt's mesh vision.

Source freshness no longer strictly requires a loaded_at_field: when none is configured, dbt can compute freshness from warehouse metadata instead, so you don't have to set it up manually. However, this currently works only on Snowflake, so users of other adapters will have to wait for support.
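Whichever way the "last loaded" timestamp is obtained (the maximum of a loaded_at_field or table metadata), the freshness check itself comes down to comparing the age of the latest load with the warn_after and error_after thresholds you configure. Here is a minimal Python sketch of that comparison. The function name freshness_status is hypothetical, and this is not dbt's implementation: dbt runs the query against your warehouse and reports the result itself.

```python
from datetime import datetime, timedelta, timezone


def freshness_status(last_loaded_at: datetime, now: datetime,
                     warn_after: timedelta, error_after: timedelta) -> str:
    """Classify data age against warn/error thresholds (conceptual sketch only)."""
    age = now - last_loaded_at
    if age > error_after:
        return "error"
    if age > warn_after:
        return "warn"
    return "pass"


if __name__ == "__main__":
    now = datetime(2023, 11, 2, 12, 0, tzinfo=timezone.utc)
    # Hypothetical timestamp: it could come from max(loaded_at_field)
    # or, with metadata-based freshness, from the table's last-modified time.
    last_load = now - timedelta(hours=30)
    print(freshness_status(last_load, now,
                           warn_after=timedelta(hours=12),
                           error_after=timedelta(hours=24)))  # prints: error
```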

dbt contracts also received several changes, including stricter rules for model versioning and column data types, plus access configuration for individual models and entire folders. If you use data contracts, you will find these changes useful.

As a bonus, the date_spine macro (previously available only in dbt_utils) is now included in dbt Core. You can also now set a custom delimiter for seed files.
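If you haven't used a date spine before: it is simply a gapless sequence of dates, typically used as the backbone of a calendar table so that days without data still get a row. The macro takes a datepart plus start and end dates and generates SQL that builds this sequence in your warehouse. The Python sketch below only illustrates what the result looks like at daily grain; the function is hypothetical and is not how the macro works internally. Like the dbt_utils version, it includes the start date and excludes the end date.

```python
from datetime import date, timedelta


def date_spine(start_date: date, end_date: date) -> list:
    """Return one date per day from start_date (inclusive) to end_date (exclusive)."""
    n_days = (end_date - start_date).days
    # An empty spine when end_date is not after start_date.
    return [start_date + timedelta(days=i) for i in range(max(n_days, 0))]


if __name__ == "__main__":
    # Prints 2023-11-01, 2023-11-02 and 2023-11-03 on separate lines.
    for d in date_spine(date(2023, 11, 1), date(2023, 11, 4)):
        print(d.isoformat())
```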

Using assets in documentation

After my post about improving dbt documentation, several people asked how to display a custom logo on the main page. Here are step-by-step instructions.

Step 1. Create a new folder called assets in the root of your dbt project (next to dbt_project.yml). This folder can hold any assets you want to show in the documentation, such as your logo and other useful images.

Step 2. Tell dbt about this folder by adding the following line to your dbt_project.yml file:

asset-paths: ["assets"]

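Step 3. Use images from this folder in your markdown, for example inside a docs block. Use the standard markdown image syntax with a path relative to the project root, e.g. `![logo](assets/logo.png)`, where logo.png is a placeholder for your own file name.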

Now you can create the most awesome dbt docs ever! 🙂

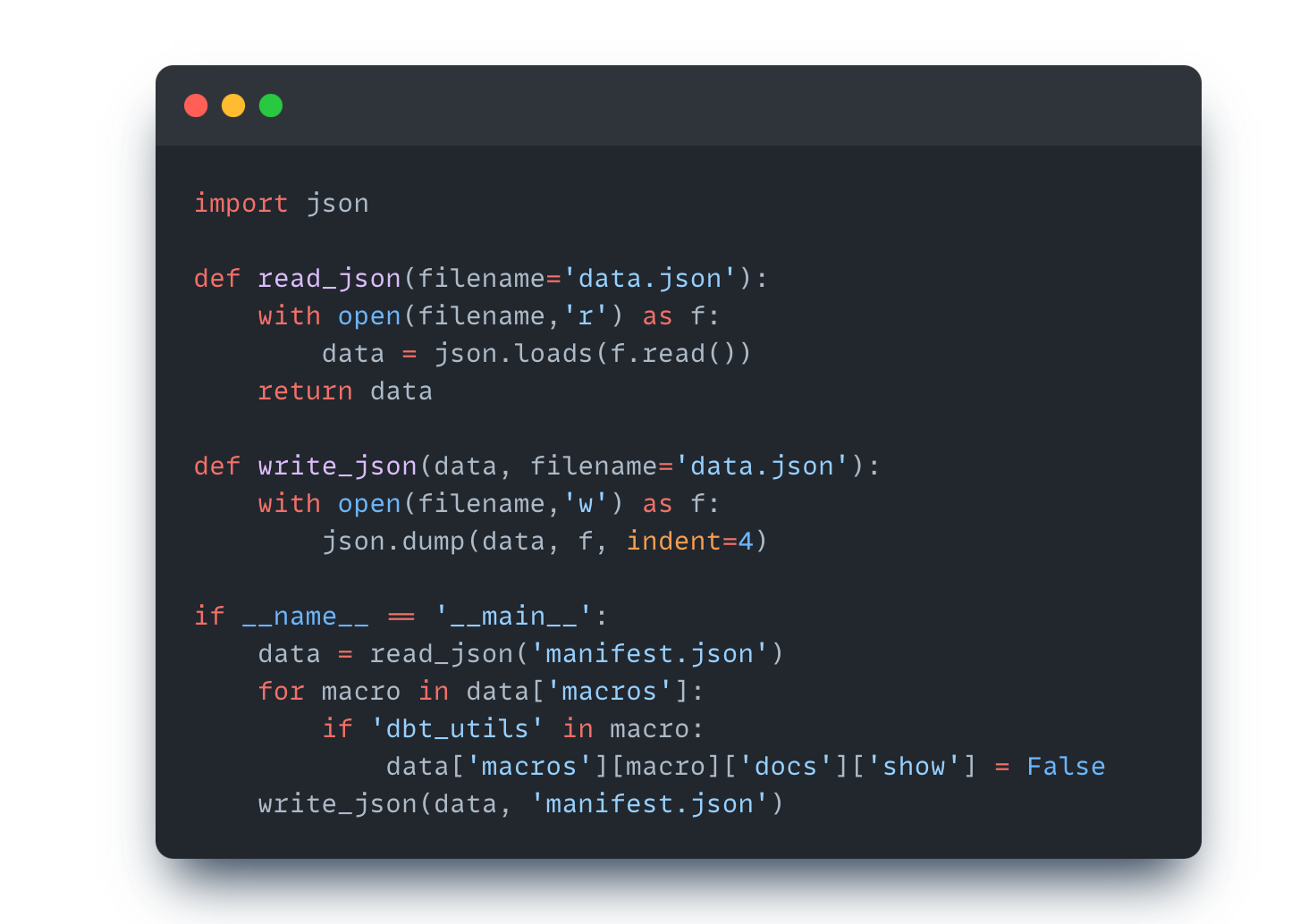

Completely hiding packages from docs

Another question several people asked is how to completely hide dbt packages from the documentation. The issue is that if a package contains macros, it still shows up in the documentation even after you hide its models.

Since there is no standard solution, we need a bit of programming. Specifically, we need to modify the manifest.json file and explicitly set the docs visibility (the show flag) to false for every external dbt package.

For example, a small Python script can do this: it reads the file, sets the visibility of the dbt_utils package (as an example) to false, and saves the changes.
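Here is a minimal sketch of such a script. It assumes the manifest lives at the default target/manifest.json and that entries in the nodes, sources and macros sections carry a package_name and a docs object with a show flag; the constants and the function name hide_packages are my own illustrative choices, so adjust them to your project.

```python
import json
from pathlib import Path

# Assumption: default target path; change it if your project uses another target-path.
MANIFEST_PATH = Path("target/manifest.json")
# Packages to hide from the docs site; dbt_utils is only an example.
HIDDEN_PACKAGES = {"dbt_utils"}
# Manifest sections whose entries carry a package_name.
SECTIONS = ("nodes", "sources", "macros")


def hide_packages(manifest: dict, packages: set) -> int:
    """Set docs.show = False for every entry belonging to one of `packages`.

    Returns the number of entries that were changed.
    """
    changed = 0
    for section in SECTIONS:
        for entry in manifest.get(section, {}).values():
            if entry.get("package_name") in packages:
                # Reuse the existing docs dict (or create one) and hide the entry.
                docs = entry.get("docs") or {}
                docs["show"] = False
                entry["docs"] = docs
                changed += 1
    return changed


def main(path: Path = MANIFEST_PATH) -> None:
    manifest = json.loads(path.read_text(encoding="utf-8"))
    changed = hide_packages(manifest, HIDDEN_PACKAGES)
    path.write_text(json.dumps(manifest), encoding="utf-8")
    print(f"Hid {changed} entries from {sorted(HIDDEN_PACKAGES)}")


if __name__ == "__main__":
    main()
```

Run it after dbt docs generate and before dbt docs serve: generating the docs rewrites manifest.json, so the change has to be reapplied every time.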

This solution is certainly technical, but it provides a workaround. Feel free to use it as needed.

Testing macros

Finally, here is a trick I discovered recently while writing a new dbt macro. Macros can be hard to debug because you have to call them from a model and recompile that model every time you want to see the result. Fortunately, there is a workaround.

Starting with dbt 1.5, you can compile a macro call on its own by passing it to the compile command with the --inline flag:

dbt compile --inline "{{ my_macro('param1', ...) }}"

This prints the compiled code of the macro to the terminal.

If you want to see the macro inside a query, the --inline flag also accepts a full SQL query that calls it. If that feels inconvenient, create an analysis file that calls the macro instead. You can then compile the analysis file like a regular model using the select syntax:

dbt compile --select my_analysis_file

Even better, run it with dbt show to preview the query results:

dbt show --select my_analysis_file

Hopefully this will make your life easier!

I hope you liked this new format! 🔥

Let me know what you think in the comments! 🤔

If you liked this issue, please subscribe and share it with your colleagues. This greatly helps me develop this newsletter!

See you next time 👋

Hi, thank you for your clear and easy-to-follow guides!

I was wondering if you have found any ways to resize the images in the dbt docs?

Without luck, I tried the following:

<img src="./assets/logo.png" alt="logo" width="200"/>

and

<div style="text-align: center;">

<img src="./assets/logo.png" alt="logo" style="width: 200px;">

</div>

and

{ width=200 }